ABSTRACT

H.E. Bal

Vrije Universiteit

Wiskundig Seminarium, Amsterdam

The EM Global Optimizer is part of the Amsterdam Compiler Kit, a toolkit for making retargetable compilers. It optimizes the intermediate code common to all compilers of the toolkit (EM), so it can be used for all programming languages and all processors supported by the kit.

The optimizer is based on well-understood concepts like control flow analysis and data flow analysis. It performs the following optimizations: Inline Substitution, Strength Reduction, Common Subexpression Elimination, Stack Pollution, Cross Jumping, Branch Optimization, Copy Propagation, Constant Propagation, Dead Code Elimination and Register Allocation.

This report describes the design of the optimizer and several of its implementation issues.

The EM Global Optimizer is part of a software toolkit for making production-quality retargetable compilers. This toolkit, called the Amsterdam Compiler Kit [Tane81a, Tane83b] runs under the Unix*

operating system.

The main design philosophy of the toolkit is to use a

language- and machine-independent intermediate code, called

EM. [Tane83a] The basic compilation process can be split up

into two parts. A language-specific front end translates the

source program into EM. A machine-specific back end

transforms EM to assembly code of the target machine.

The global optimizer is an optional phase of the compilation process, and can be used to obtain machine code of a higher quality. The optimizer transforms EM-code to better EM-code, so it comes between the front end and the back end. It can be used with any combination of languages and machines, as far as they are supported by the compiler kit.

This report describes the design of the global optimizer and several of its implementation issues. Measurements can be found in. [Bal86a]

The EM Global Optimizer is one of three optimizers that are part of the Amsterdam Compiler Kit (ACK). The phases of ACK are:

|

1. |

A Front End translates a source program to EM |

|

2. |

The Peephole Optimizer [a] reads EM code and produces ’better’ EM code. It performs a number of optimizations (mostly peephole optimizations) such as constant folding, strength reduction and unreachable code elimination. |

|

3. |

The Global Optimizer further improves the EM code. |

|

4. |

The Code Generator transforms EM to assembly code of the target computer. |

|

5. |

The Target Optimizer improves the assembly code. |

|

6. |

An Assembler/Loader generates an executable file. |

For a more extensive overview of the ACK compilation process, we refer to. [Tane81a, Tane83b]

The input of the Global Optimizer may consist of files and libraries. Every file or module in the library must contain EM code in Compact Assembly Language format. [Tane83a, section 11.2] The output consists of one such EM file. The input files and libraries together need not constitute an entire program, although as much of the program as possible should be supplied. The more information about the program the optimizer gets, the better its output code will be.

The Global Optimizer is language- and machine-independent, i.e. it can be used for all languages and machines supported by ACK. Yet, it puts some unavoidable restrictions on the EM code produced by the Front End (see below). It must have some knowledge of the target machine. This knowledge is expressed in a machine description table which is passed as argument to the optimizer. This table does not contain very detailed information about the target (such as its instruction set and addressing modes).

The definition of EM, the intermediate code of all ACK compilers, is given in a separate document. [Tane83a] We will only discuss some features of EM that are most relevant to the Global Optimizer.

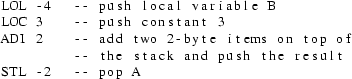

EM is the assembly code of a virtual stack machine. All operations are performed on the top of the stack. For example, the statement "A := B + 3" may be expressed in EM as:

So EM is essentially a postfix code.

EM has a rich instruction set, containing several arithmetic and logical operators. It also contains special-case instructions (such as INCrement).

EM has global (external) variables, accessible by all procedures and local variables, accessible by a few (nested) procedures. The local variables of a lexically enclosing procedure may be accessed via a static link. EM has instructions to follow the static chain. There are EM instruction to allow a procedure to access its local variables directly (such as LOL and STL above). Local variables are referenced via an offset in the stack frame of the procedure, rather than by their names (e.g. -2 and -4 above). The EM code does not contain the (source language) type of the variables.

All structured statements in the source program are expressed in low level jump instructions. Besides conditional and unconditional branch instructions, there are two case instructions (CSA and CSB), to allow efficient translation of case statements.

As the optimizer should be useful for all languages, it clearly should not put severe restrictions on the EM code of the input. There is, however, one immovable requirement: it must be possible to determine the flow of control of the input program. As virtually all global optimizations are based on control flow information, the optimizer would be totally powerless without it. For this reason we restrict the usage of the case jump instructions (CSA/CSB) of EM. Such an instruction is always called with the address of a case descriptor on top the the stack. [Tane83a section 7.4] This descriptor contains the labels of all possible destinations of the jump. We demand that all case descriptors are allocated in a global data fragment of type ROM, i.e. the case descriptors may not be modifyable. Furthermore, any case instruction should be immediately preceded by a LAE (Load Address External) instruction, that loads the address of the descriptor, so the descriptor can be uniquely identified.

The optimizer will work improperly if the user deceives the control flow. We will give two methods to do this.

In "C" the notorious library routines "setjmp" and "longjmp" [Kern79a] may be used to jump out of a procedure, but can also be used for a number of other stuffy purposes, for example, to create an extra entry point in a loop.

while (condition) {

}

...

longjmp(buf);

The invocation to longjmp actually is a jump to the place of the last call to setjmp with the same argument (buf). As the calls to setjmp and longjmp are indistinguishable from normal procedure calls, the optimizer will not see the danger. No need to say that several loop optimizations will behave unexpectedly when presented with such pathological input.

Another way to deceive the flow of control is by using exception handling routines. Ada*

has clearly recognized the dangers of exception handling, but other languages (such as PL/I) have not. [Ichb79a]

The optimizer will be more effective if the EM input contains some extra information about the source program. Especially the register message is very important. These messages indicate which local variables may never be accessed indirectly. Most optimizations benefit significantly by this information.

The Inline Substitution technique needs to know how many bytes of formal parameters every procedure accesses. Only calls to procedures for which the EM code contains this information will be substituted in line.

The Global Optimizer is organized as a number of phases, each one performing some task. The main structure is as follows:

|

IC |

the Intermediate Code construction phase transforms EM into the intermediate code (ic) of the optimizer |

|

CF |

the Control Flow phase extends the ic with control flow information and interprocedural information |

|

OPTs |

zero or more optimization phases, each one performing one or more related optimizations |

|

CA |

the Compact Assembly phase generates Compact Assembly Language EM code out of ic. |

An important issue in the design of a global optimizer is the interaction between optimization techniques. It is often advantageous to combine several techniques in one algorithm that takes into account all interactions between them. Ideally, one single algorithm should be developed that does all optimizations simultaneously and deals with all possible interactions. In practice, such an algorithm is still far out of reach. Instead some rather ad hoc (albeit important) combinations are chosen, such as Common Subexpression Elimination and Register Allocation. [Prab80a, Seth70a]

In the Em Global Optimizer there is one separate algorithm for every technique. Note that this does not mean that all techniques are independent of each other.

In principle, the optimization phases can be run in any order; a phase may even be run more than once. However, the following rules should be obeyed:

|

- |

the Live Variable analysis phase (LV) must be run prior to Register Allocation (RA), as RA uses information outputted by LV. |

|

- |

RA should be the last phase; this is a consequence of the way the interface between RA and the Code Generator is defined. |

The ordering of the phases has significant impact on the quality of the produced code. In [Leve79a] two kinds of phase ordering problems are distinguished. If two techniques A and B both take away opportunities of each other, there is a "negative" ordering problem. If, on the other hand, both A and B introduce new optimization opportunities for each other, the problem is called "positive". In the Global Optimizer the following interactions must be taken into account:

|

- |

Inline Substitution (IL) may create new opportunities for most other techniques, so it should be run as early as possible |

|

- |

Use Definition analysis (UD) may introduce opportunities for LV. |

|

- |

Strength Reduction may create opportunities for UD |

The optimizer has a default phase ordering, which can be changed by the user.

The remaining chapters of this document each describe one phase of the optimizer. For every phase, we describe its task, its design, its implementation, and its source files. The latter two sections are intended to aid the maintenance of the optimizer and can be skipped by the initial reader.

There are very few modern textbooks on optimization. Chapters 12, 13, and 14 of [Aho78a] are a good introduction to the subject. Wulf et. al. [Wulf75a] describe one specific optimizing (Bliss) compiler. Anklam et. al. [Ankl82a] discuss code generation and optimization in compilers for one specific machine (a Vax-11). Kirchgaesner et. al. [Kirc83a] present a brief description of many optimizations; the report also contains a lengthy (over 60 pages) bibliography.

The number of articles on optimization is quite impressive. The Lowry and Medlock paper on the Fortran H compiler [Lowr69a] is a classical one. Other papers on global optimization are. [Faim80a, Perk79a, Harr79a, More79a, Mint79a] Freudenberger [Freu83a] describes an optimizer for a Very High Level Language (SETL). The Production-Quality Compiler-Compiler (PQCC) project uses very sophisticated compiler techniques, as described in. [Leve80a, Leve79a, Wulf80a]

Several Ph.D. theses are dedicated to optimization. Davidson [Davi81a] outlines a machine-independent peephole optimizer that improves assembly code. Katkus [Katk73a] describes how efficient programs can be obtained at little cost by optimizing only a small part of a program. Photopoulos [Phot81a] discusses the idea of generating interpreted intermediate code as well as assembly code, to obtain programs that are both small and fast. Shaffer [Shaf78a] describes the theory of automatic subroutine generation. Leverett [Leve81a] deals with register allocation in the PQCC compilers.

References to articles about specific optimization techniques will be given in later chapters.

In this chapter the intermediate code of the EM global optimizer will be defined. The ’Intermediate Code construction’ phase (IC), which builds the initial intermediate code from EM Compact Assembly Language, will be described.

The EM global optimizer is a multi pass program, hence there is a need for an intermediate code. Usually, programs in the Amsterdam Compiler Kit use the Compact Assembly Language format [Tane83a, section 11.2] for this purpose. Although this code has some convenient features, such as being compact, it is quite unsuitable in our case, because of a number of reasons. At first, the code lacks global information about whole procedures or whole basic blocks. Second, it uses identifiers (’names’) to bind defining and applied occurrences of procedures, data labels and instruction labels. Although this is usual in high level programming languages, it is awkward in an intermediate code that must be read many times. Each pass of the optimizer would have to incorporate an identifier look-up mechanism to associate a defining occurrence with each applied occurrence of an identifier. Finally, EM programs are used to declare blocks of bytes, rather than variables. A ’hol 6’ instruction may be used to declare three 2-byte variables. Clearly, the optimizer wants to deal with variables, and not with rows of bytes.

To overcome these problems, we have developed a new intermediate code. This code does not merely consist of the EM instructions, but also contains global information in the form of tables and graphs. Before describing the intermediate code we will first leap aside to outline the problems one generally encounters when trying to store complex data structures such as graphs outside the program, i.e. in a file. We trust this will enhance the comprehensibility of the intermediate code definition and the design and implementation of the IC phase.

Most programmers are quite used to deal with complex data structures, such as arrays, graphs and trees. There are some particular problems that occur when storing such a data structure in a sequential file. We call data that is kept in main memory internal ,as opposed to external data that is kept in a file outside the program.

We assume a simple data structure of a scalar type (integer, floating point number) has some known external representation. An array having elements of a scalar type can be represented externally easily, by successively representing its elements. The external representation may be preceded by a number, giving the length of the array. Now, consider a linear, singly linked list, the elements of which look like:

record

data: scalar_type;

next: pointer_type;

end;

It is significant to note that the "next" fields of the elements only have a meaning within main memory. The field contains the address of some location in main memory. If a list element is written to a file in some program, and read by another program, the element will be allocated at a different address in main memory. Hence this address value is completely useless outside the program.

One may represent the list by ignoring these "next" fields and storing the data items in the order they are linked. The "next" fields are represented implicitly. When the file is read again, the same list can be reconstructed. In order to know where the external representation of the list ends, it may be useful to put the length of the list in front of it.

Note that arrays and linear lists have the same external representation.

A doubly linked, linear list, with elements of the type:

record

data: scalar_type;

next,

previous: pointer_type;

end

can be represented in precisely the same way. Both the "next" and the "previous" fields are represented implicitly.

Next, consider a binary tree, the nodes of which have type:

record

data: scalar_type;

left,

right: pointer_type;

end

Such a tree can be represented sequentially, by storing its nodes in some fixed order, e.g. prefix order. A special null data item may be used to denote a missing left or right son. For example, let the scalar type be integer, and let the null item be 0. Then the tree of fig. 3.1(a) can be represented as in fig. 3.1(b).

4

/ \

9 12

/ \ / \

12 3 4 6

/ \ \ /

8 1 5 1

Fig. 3.1(a) A binary tree

4 9 12 0 0 3 8 0 0 1 0 0 12 4 0 5 0 0 6 1 0 0 0

Fig. 3.1(b) Its sequential representation

We are still able to represent the pointer fields ("left" and "right") implicitly.

Finally, consider a general graph , where each node has a "data" field and pointer fields, with no restriction on where they may point to. Now we’re at the end of our tale. There is no way to represent the pointers implicitly, like we did with lists and trees. In order to represent them explicitly, we use the following scheme. Every node gets an extra field, containing some unique number that identifies the node. We call this number its id. A pointer is represented externally as the id of the node it points to. When reading the file we use a table that maps an id to the address of its node. In general this table will not be completely filled in until we have read the entire external representation of the graph and allocated internal memory locations for every node. Hence we cannot reconstruct the graph in one scan. That is, there may be some pointers from node A to B, where B is placed after A in the sequential file than A. When we read the node of A we cannot map the id of B to the address of node B, as we have not yet allocated node B. We can overcome this problem if the size of every node is known in advance. In this case we can allocate memory for a node on first reference. Else, the mapping from id to pointer cannot be done while reading nodes. The mapping can be done either in an extra scan or at every reference to the node.

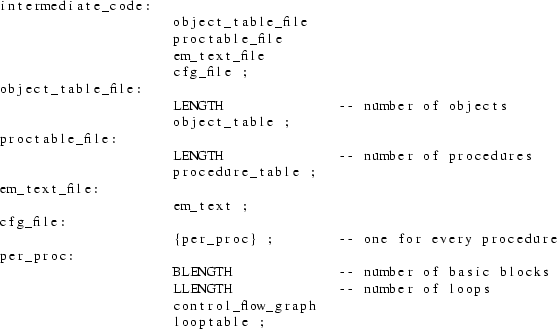

The intermediate code of the optimizer consists of several components:

|

- |

the object table |

|

- |

the procedure table |

|

- |

the em code |

|

- |

the control flow graphs |

|

- |

the loop table |

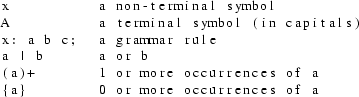

These components are described in the next sections. The syntactic structure of every component is described by a set of context free syntax rules, with the following conventions:

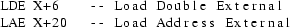

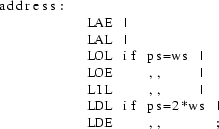

EM programs declare blocks of bytes rather than (global) variables. A typical program may declare ’HOL 7780’ to allocate space for 8 I/O buffers, 2 large arrays and 10 scalar variables. The optimizer wants to deal with objects like variables, buffers and arrays and certainly not with huge numbers of bytes. Therefore the intermediate code contains information about which global objects are used. This information can be obtained from an EM program by just looking at the operands of instruction such as LOE, LAE, LDE, STE, SDE, INE, DEE and ZRE.

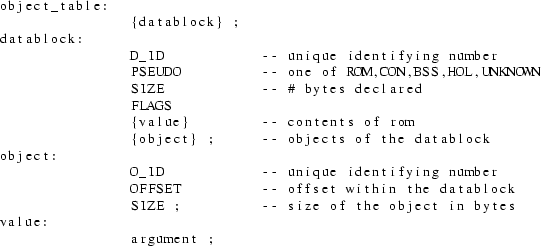

The object table consists of a list of datablock entries. Each such entry represents a declaration like HOL, BSS, CON or ROM. There are five kinds of datablock entries. The fifth kind, UNKNOWN, denotes a declaration in a separately compiled file that is not made available to the optimizer. Each datablock entry contains the type of the block, its size, and a description of the objects that belong to it. If it is a rom, it also contains a list of values given as arguments to the rom instruction, provided that this list contains only integer numbers. An object has an offset (within its datablock) and a size. The size need not always be determinable. Both datablock and object contain a unique identifying number (see previous section for their use).

syntax

A data block has only one flag: "external", indicating whether the data label is externally visible. The syntax for "argument" will be given later on (see em_text).

The procedure table contains global information about all procedures that are made available to the optimizer and that are needed by the EM program. (Library units may not be needed, see section 3.5). The table has one entry for every procedure.

syntax

The number of bytes of formal parameters accessed by a

procedure is determined by the front ends and passed via a

message (parameter message) to the optimizer. If the front

end is not able to determine this number (e.g. the parameter

may be an array of dynamic size or the procedure may have a

variable number of arguments) the attribute contains the

value ’UNKNOWN_SIZE’.

A procedure has the following flags:

|

- |

external: true if the proc. is externally visible |

|

- |

bodyseen: true if its code is available as EM text |

|

- |

calunknown: true if it calls a procedure that has its bodyseen flag not set |

|

- |

environ: true if it uses or changes a (non-global) variable in a lexically enclosing procedure |

|

- |

lpi: true if is used as operand of an lpi instruction, so it may be called indirect |

The change and use attributes both have one flag: "indirect", indicating whether the procedure does a ’use indirect’ or a ’store indirect’ (indirect means through a pointer).

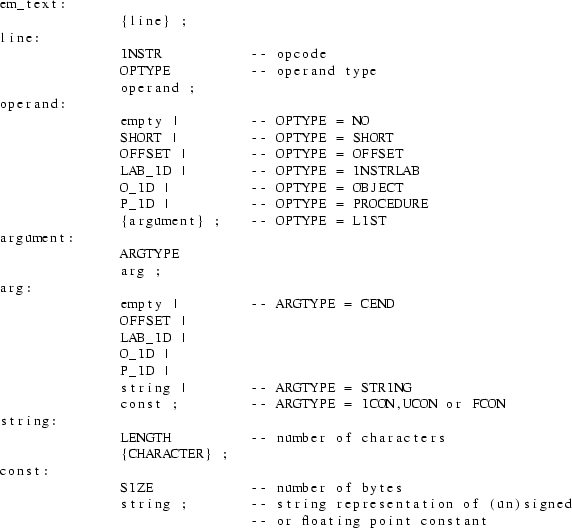

The EM text contains the EM instructions. Every EM instruction has an operation code (opcode) and 0 or 1 operands. EM pseudo instructions can have more than 1 operand. The opcode is just a small (8 bit) integer.

There are several kinds of operands, which we will refer to as types. Many EM instructions can have more than one type of operand. The types and their encodings in Compact Assembly Language are discussed extensively in. [Tane83a, section 11.2] Of special interest is the way numeric values are represented. Of prime importance is the machine independency of the representation. Ultimately, one could store every integer just as a string of the characters ’0’ to ’9’. As doing arithmetic on strings is awkward, Compact Assembly Language allows several alternatives. The main idea is to look at the value of the integer. Integers that fit in 16, 32 or 64 bits are represented as a row of resp. 2, 4 and 8 bytes, preceded by an indication of how many bytes are used. Longer integers are represented as strings; this is only allowed within pseudo instructions, however. This concept works very well for target machines with reasonable word sizes. At present, most ACK software cannot be used for word sizes higher than 32 bits, although the handles for using larger word sizes are present in the design of the EM code. In the intermediate code we essentially use the same ideas. We allow three representations of integers.

|

- |

integers that fit in a short are represented as a short |

|

- |

integers that fit in a long but not in a short are represented as longs |

|

- |

all remaining integers are represented as strings (only allowed in pseudos). |

The terms short and long are defined in [Ritc78a, section 4] and depend only on the source machine (i.e. the machine on which ACK runs), not on the target machines. For historical reasons a long will often be called an offset.

Operands can also be instruction labels, objects or procedures. Instruction labels are denoted by a label identifier, which can be distinguished from a normal identifier.

The operand of a pseudo instruction can be a list of arguments. Arguments can have the same type as operands, except for the type short, which is not used for arguments. Furthermore, an argument can be a string or a string representation of a signed integer, unsigned integer or floating point number. If the number of arguments is not fully determined by the pseudo instruction (e.g. a ROM pseudo can have any number of arguments), then the list is terminated by a special argument of type CEND.

syntax

Each procedure can be divided into a number of basic blocks. A basic block is a piece of code with no jumps in, except at the beginning, and no jumps out, except at the end.

Every basic block has a set of successors, which are basic blocks that can follow it immediately in the dynamic execution sequence. The predecessors are the basic blocks of which this one is a successor. The successor and predecessor attributes of all basic blocks of a single procedure are said to form the control flow graph of that procedure.

Another important attribute is the immediate dominator. A basic block B dominates a block C if every path in the graph from the procedure entry block to C goes through B. The immediate dominator of C is the closest dominator of C on any path from the entry block. (Note that the dominator relation is transitive, so the immediate dominator is well defined.)

A basic block also has an attribute containing the identifiers of every loop that the block belongs to (see next section for loops).

syntax

The flag bits can have the values ’firm’ and ’strong’, which are explained below.

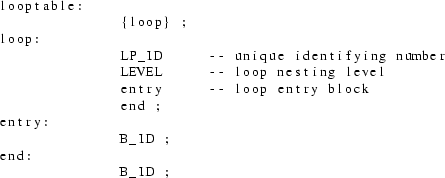

Every procedure has an associated loop table containing information about all the loops in the procedure. Loops can be detected by a close inspection of the control flow graph. The main idea is to look for two basic blocks, B and C, for which the following holds:

|

- |

B is a successor of C |

|

- |

B is a dominator of C |

B is called the loop entry and C is called the loop end. Intuitively, C contains a jump backwards to the beginning of the loop (B).

A loop L1 is said to be nested within loop L2 if all basic blocks of L1 are also part of L2. It is important to note that loops could originally be written as a well structured for -or while loop or as a messy goto loop. Hence loops may partly overlap without one being nested inside the other. The nesting level of a loop is the number of loops in which it is nested (so it is 0 for an outermost loop). The details of loop detection will be discussed later.

It is often desirable to know whether a basic block gets executed during every iteration of a loop. This leads to the following definitions:

|

- |

A basic block B of a loop L is said to be a firm block of L if B is executed on all successive iterations of L, with the only possible exception of the last iteration. |

|

- |

A basic block B of a loop L is said to be a strong block of L if B is executed on all successive iterations of L. |

Note that a strong block is also a firm block. If a block is part of a conditional statement, it is neither strong nor firm, as it may be skipped during some iterations (see Fig. 3.2).

loop

if cond1 then

|

-- result in a firm or strong block |

end if;

... -- strong (always executed)

exit when cond2;

... -- firm (not executed on last iteration).

end loop;

Fig. 3.2 Example of firm and strong block

syntax

The syntax of the intermediate code was given in the previous section. In this section we will make some remarks about the representation of the code in sequential files.

We use sequential files in order to avoid the bookkeeping of complex file indices. As a consequence of this decision we can’t store all components of the intermediate code in one file. If a phase wishes to change some attribute of a procedure, or wants to add or delete entire procedures (inline substitution may do the latter), the procedure table will only be fully updated after the entire EM text has been scanned. Yet, the next phase undoubtedly wants to read the procedure table before it starts working on the EM text. Hence there is an ordering problem, which can be solved easily by putting the procedure table in a separate file. Similarly, the data block table is kept in a file of its own.

The control flow graphs (CFGs) could be mixed with the EM text. Rather, we have chosen to put them in a separate file too. The control flow graph file should be regarded as a file that imposes some structure on the EM-text file, just as an overhead sheet containing a picture of a Flow Chart may be put on an overhead sheet containing statements. The loop tables are also put in the CFG file. A loop imposes an extra structure on the CFGs and hence on the EM text. So there are four files:

|

- |

the EM-text file |

|

- |

the procedure table file |

|

- |

the object table file |

|

- |

the CFG and loop tables file |

Every table is preceded by its length, in order to tell where it ends. The CFG file also contains the number of instructions of every basic block, indicating which part of the EM text belongs to that block.

syntax

The first phase of the global optimizer, called IC, constructs a major part of the intermediate code. To be specific, it produces:

|

- |

the EM text |

|

- |

the object table |

|

- |

part of the procedure table |

The calling, change and use attributes of a procedure and all its flags except the external and bodyseen flags are computed by the next phase (Control Flow phase).

As explained before, the intermediate code does not contain any names of variables or procedures. The normal identifiers are replaced by identifying numbers. Yet, the output of the global optimizer must contain normal identifiers, as this output is in Compact Assembly Language format. We certainly want all externally visible names to be the same in the input as in the output, because the optimized EM module may be a library unit, used by other modules. IC dumps the names of all procedures and data labels on two files:

|

- |

the procedure dump file, containing tuples (P_ID, procedure name) |

|

- |

the data dump file, containing tuples (D_ID, data label name) |

The names of instruction labels are not dumped, as they are not visible outside the procedure in which they are defined.

The input to IC consists of one or more files. Each file is either an EM module in Compact Assembly Language format, or a Unix archive file (library) containing such modules. IC only extracts those modules from a library that are needed somehow, just as a linker does. It is advisable to present as much code of the EM program as possible to the optimizer, although it is not required to present the whole program. If a procedure is called somewhere in the EM text, but its body (text) is not included in the input, its bodyseen flag in the procedure table will still be off. Whenever such a procedure is called, we assume the worst case for everything; it will change and use all variables it has access to, it will call every procedure etc.

Similarly, if a data label is used but not defined, the PSEUDO attribute in its data block will be set to UNKNOWN.

Part of the code for the EM Peephole Optimizer [b] has been used for IC. Especially the routines that read and unravel Compact Assembly Language and the identifier lookup mechanism have been used. New code was added to recognize objects, build the object and procedure tables and to output the intermediate code.

IC uses singly linked linear lists for both the procedure and object table. Hence there are no limits on the size of such a table (except for the trivial fact that it must fit in main memory). Both tables are outputted after all EM code has been processed. IC reads the EM text of one entire procedure at a time, processes it and appends the modified code to the EM text file. EM code is represented internally as a doubly linked linear list of EM instructions.

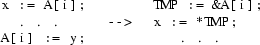

Objects are recognized by looking at the operands of instructions that reference global data. If we come across the instructions:

we conclude that the data block preceded by the data label X contains an object at offset 6 of size twice the word size, and an object at offset 20 of unknown size.

A data block entry of the object table is allocated at the first reference to a data label. If this reference is a defining occurrence or a INA pseudo instruction, the label is not externally visible [Tane83a, section 11.1.4.3] In this case, the external flag of the data block is turned off. If the first reference is an applied occurrence or a EXA pseudo instruction, the flag is set. We record this information, because the optimizer may change the order of defining and applied occurrences. The INA and EXA pseudos are removed from the EM text. They may be regenerated by the last phase of the optimizer.

Similar rules hold for the procedure table and the INP and EXP pseudos.

The source files of IC consist of the files ic.c, ic.h and several packages. ic.h contains type definitions, macros and variable declarations that may be used by ic.c and by every package. ic.c contains the definitions of these variables, the procedure main and some high level I/O routines used by main.

Every package xxx consists of two files. ic_xxx.h contains type definitions, macros, variable declarations and procedure declarations that may be used by every .c file that includes this .h file. The file ic_xxx.c provides the definitions of these variables and the implementation of the declared procedures. IC uses the following packages:

|

lookup: |

procedures that loop up procedure, data label and instruction label names; procedures to dump the procedure and data label names. |

|

lib: |

one procedure that gets the next useful input module; while scanning archives, it skips unnecessary modules. |

|

aux: |

several auxiliary routines. |

|

io: |

low-level I/O routines that unravel the Compact Assembly Language. |

|

put: |

routines that output the intermediate code |

In the previous chapter we described the intermediate code of the global optimizer. We also specified which part of this code was constructed by the IC phase of the optimizer. The Control Flow Phase (CF) does the remainder of the job, i.e. it determines:

|

- |

the control flow graphs |

|

- |

the loop tables |

|

- |

the calling, change and use attributes of the procedure table entries |

CF operates on one procedure at a time. For every procedure it first reads the EM instructions from the EM-text file and groups them into basic blocks. For every basic block, its successors and predecessors are determined, resulting in the control flow graph. Next, the immediate dominator of every basic block is computed. Using these dominators, any loop in the procedure is detected. Finally, interprocedural analysis is done, after which we will know the global effects of every procedure call on its environment.

CF uses the same internal data structures for the procedure table and object table as IC.

With regard to flow of control, we distinguish three kinds of EM instructions: jump instructions, instruction label definitions and normal instructions. Jump instructions are all conditional or unconditional branch instructions, the case instructions (CSA/CSB) and the RET (return) instruction. A procedure call (CAL) is not considered to be a jump. A defining occurrence of an instruction label is regarded as an EM instruction.

An instruction starts a new basic block, in any of the following cases:

|

1. |

It is the first instruction of a procedure |

|

2. |

It is the first of a list of instruction label defining occurrences |

|

3. |

It follows a jump |

If there are several consecutive instruction labels (which is highly unusual), all of them are put in the same basic block. Note that several cases may overlap, e.g. a label definition at the beginning of a procedure or a label following a jump.

A simple Finite State Machine is used to model the above rules. It also recognizes the end of a procedure, marked by an END pseudo. The basic blocks are stored internally as a doubly linked linear list. The blocks are linked in textual order. Every node of this list has the attributes described in the previous chapter (see syntax rule for basic_block). Furthermore, every node contains a pointer to its EM instructions, which are represented internally as a linear, doubly linked list, just as in the IC phase. However, instead of one list per procedure (as in IC) there is now one list per basic block.

On the fly, a table is build that maps every label identifier to the label definition instruction. This table is used for computing the control flow. The table is stored as a dynamically allocated array. The length of the array is the number of labels of the current procedure; this value can be found in the procedure table, where it was stored by IC.

A successor of a basic block B is a block C that can be executed immediately after B. C is said to be a predecessor of B. A block ending with a RET instruction has no successors. Such a block is called a return block. Any block that has no predecessors cannot be executed at all (i.e. it is unreachable), unless it is the first block of a procedure, called the procedure entry block.

Internally, the successor and predecessor attributes of a basic block are stored as sets. Alternatively, one may regard all these sets of all basic blocks as a conceptual graph, in which there is an edge from B to C if C is in the successor set of B. We call this conceptual graph the Control Flow Graph.

The only successor of a basic block ending on an unconditional branch instruction is the block that contains the label definition of the target of the jump. The target instruction can be found via the LAB_ID that is the operand of the jump instruction, by using the label-map table mentioned above. If the last instruction of a block is a conditional jump, the successors are the target block and the textually next block. The last instruction can also be a case jump instruction (CSA or CSB). We then analyze the case descriptor, to find all possible target instructions and their associated blocks. We require the case descriptor to be allocated in a ROM, so it cannot be changed dynamically. A case jump via an alterable descriptor could in principle go to any label in the program. In the presence of such an uncontrolled jump, hardly any optimization can be done. We do not expect any front end to generate such a descriptor, however, because of the controlled nature of case statements in high level languages. If the basic block does not end in a jump instruction, its only successor is the textually next block.

A basic block B dominates a block C if every path in the control flow graph from the procedure entry block to C goes through B. The immediate dominator of C is the closest dominator of C on any path from the entry block. See also [Aho78a, section 13.1.]

There are a number of algorithms to compute the immediate dominator relation.

|

1. |

Purdom and Moore give an algorithm that is easy to program and easy to describe (although the description they give is unreadable; it is given in a very messy Algol60 program full of gotos). [Purd72a] |

|

2. |

Aho and Ullman present a bitvector algorithm, which is also easy to program and to understand. (See [Aho78a, section 13.1.]). |

|

3 |

Lengauer and Tarjan introduce a fast algorithm that is hard to understand, yet remarkably easy to implement. [Leng79a] |

The Purdom-Moore algorithm is very slow if the number of basic blocks in the flow graph is large. The Aho-Ullman algorithm in fact computes the dominator relation, from which the immediate dominator relation can be computed in time quadratic to the number of basic blocks, worst case. The storage requirement is also quadratic to the number of blocks. The running time of the third algorithm is proportional to:

(number of edges in the graph) * log(number of blocks).

We have chosen this algorithm because it is fast (as shown by experiments done by Lengauer and Tarjan), it is easy to program and requires little data space.

Loops are detected by using the loop construction algorithm of. [Aho78a, section 13.1.] This algorithm uses back edges. A back edge is an edge from B to C in the CFG, whose head (C) dominates its tail (B). The loop associated with this back edge consists of C plus all nodes in the CFG that can reach B without going through C.

As an example of how the algorithm works, consider the piece of program of Fig. 4.1. First just look at the program and try to see what part of the code constitutes the loop.

loop

if cond then 1

-- lots of simple

-- assignment

-- statements 2 3

exit; -- exit loop

else

S; -- one statement

end if;

end loop;

Fig. 4.1 A misleading loop

Although a human being may be easily deceived by the brackets "loop" and "end loop", the loop detection algorithm will correctly reply that only the test for "cond" and the single statement in the false-part of the if statement are part of the loop! The statements in the true-part only get executed once, so there really is no reason at all to say they’re part of the loop too. The CFG contains one back edge, "3->1". As node 3 cannot be reached from node 2, the latter node is not part of the loop.

A source of problems with the algorithm is the fact that different back edges may result in the same loop. Such an ill-structured loop is called a messy loop. After a loop has been constructed, it is checked if it is really a new loop.

Loops can partly overlap, without one being nested inside the other. This is the case in the program of Fig. 4.2.

1: 1

S1;

2:

S2; 2

if cond then

goto 4;

S3; 3 4

goto 1;

4:

S4;

goto 1;

Fig. 4.2 Partly overlapping loops

There are two back edges "3->1" and "4->1", resulting in the loops {1,2,3} and {1,2,4}. With every basic block we associate a set of all loops it is part of. It is not sufficient just to record its most enclosing loop.

After all loops of a procedure are detected, we determine the nesting level of every loop. Finally, we find all strong and firm blocks of the loop. If the loop has only one back edge (i.e. it is not messy), the set of firm blocks consists of the head of this back edge and its dominators in the loop (including the loop entry block). A firm block is also strong if it is not a successor of a block that may exit the loop; a block may exit a loop if it has an (immediate) successor that is not part of the loop. For messy loops we do not determine the strong and firm blocks. These loops are expected to occur very rarely.

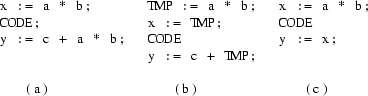

It is often desirable to know the effects a procedure call may have. The optimization below is only possible if we know for sure that the call to P cannot change A.

Although it is not possible to predict exactly all the effects a procedure call has, we may determine a kind of upper bound for it. So we compute all variables that may be changed by P, although they need not be changed at every invocation of P. We can get hold of this set by just looking at all assignment (store) instructions in the body of P. EM also has a set of indirect assignment instructions, i.e. assignment through a pointer variable. In general, it is not possible to determine which variable is affected by such an assignment. In these cases, we just record the fact that P does an indirect assignment. Note that this does not mean that all variables are potentially affected, as the front ends may generate messages telling that certain variables can never be accessed indirectly. We also set a flag if P does a use (load) indirect. Note that we only have to look at global variables. If P changes or uses any of its locals, this has no effect on its environment. Local variables of a lexically enclosing procedure can only be accessed indirectly.

A procedure P may of course call another procedure. To determine the effects of a call to P, we also must know the effects of a call to the second procedure. This second one may call a third one, and so on. Effectively, we need to compute the transitive closure of the effects. To do this, we determine for every procedure which other procedures it calls. This set is the "calling" attribute of a procedure. One may regard all these sets as a conceptual graph, in which there is an edge from P to Q if Q is in the calling set of P. This graph will be referred to as the call graph. (Note the resemblance with the control flow graph).

We can detect which procedures are called by P by looking at all CAL instructions in its body. Unfortunately, a procedure may also be called indirectly, via a CAI instruction. Yet, only procedures that are used as operand of an LPI instruction can be called indirect, because this is the only way to take the address of a procedure. We determine for every procedure whether it does a CAI instruction. We also build a set of all procedures used as operand of an LPI.

After all procedures have been processed (i.e. all CFGs are constructed, all loops are detected, all procedures are analyzed to see which variables they may change, which procedures they call, whether they do a CAI or are used in an LPI) the transitive closure of all interprocedural information is computed. During the same process, the calling set of every procedure that uses a CAI is extended with the above mentioned set of all procedures that can be called indirect.

The sources of CF are in the following files and packages:

|

cf.h: |

declarations of global variables and data structures |

|

cf.c: |

the routine main; interprocedural analysis; transitive closure |

|

succ: |

control flow (successor and predecessor) |

|

idom: |

immediate dominators |

|

loop: |

loop detection |

|

get: |

read object and procedure table; read EM text and partition it into basic blocks |

|

put: |

write tables, CFGs and EM text |

The Inline Substitution technique (IL) tries to decrease the overhead associated with procedure calls (invocations). During a procedure call, several actions must be undertaken to set up the right environment for the called procedure. [John81a] On return from the procedure, most of these effects must be undone. This entire process introduces significant costs in execution time as well as in object code size.

The inline substitution technique replaces some of the calls by the modified body of the called procedure, hence eliminating the overhead. Furthermore, as the calling and called procedure are now integrated, they can be optimized together, using other techniques of the optimizer. This often leads to extra opportunities for optimization [Ball79a, Cart77a, Sche77a]

An inline substitution of a call to a procedure P increases the size of the program, unless P is very small or P is called only once. In the latter case, P can be eliminated. In practice, procedures that are called only once occur quite frequently, due to the introduction of structured programming. (Carter [Cart82a] states that almost 50% of the Pascal procedures he analyzed were called just once).

Scheifler [Sche77a] has a more general view of inline substitution. In his model, the program under consideration is allowed to grow by a certain amount, i.e. code size is sacrificed to speed up the program. The above two cases are just special cases of his model, obtained by setting the size-change to (approximately) zero. He formulates the substitution problem as follows:

|

"Given a program, a subset of all invocations, a maximum program size, and a maximum procedure size, find a sequence of substitutions that minimizes the expected execution time." |

Scheifler shows that this problem is NP-complete [Aho74a, chapter 10] by reduction to the Knapsack Problem. Heuristics will have to be used to find a near-optimal solution.

In the following chapters we will extend Scheifler’s view and adapt it to the EM Global Optimizer. We will first describe the transformations that have to be applied to the EM text when a call is substituted in line. Next we will examine in which cases inline substitution is not possible or desirable. Heuristics will be developed for chosing a good sequence of substitutions. These heuristics make no demand on the user (such as making profiles [Sche77a] or giving pragmats [Ichb83a, section 6.3.2]), although the model could easily be extended to use such information. Finally, we will discuss the implementation of the IL phase of the optimizer.

We will often use the term inline expansion as a synonym

of inline substitution.

The inverse technique of procedure abstraction (automatic

subroutine generation) [Shaf78a] will not be discussed in

this report.

In the EM calling sequence, the calling procedure pushes its parameters on the stack before doing the CAL. The called routine first saves some status information on the stack and then allocates space for its own locals (also on the stack). Usually, one special purpose register, the Local Base (LB) register, is used to access both the locals and the parameters. If memory is highly segmented, the stack frames of the caller and the callee may be allocated in different fragments; an extra Argument Base (AB) register is used in this case to access the actual parameters. See 4.2 of [Tane83a] for further details.

If a procedure call is expanded in line, there are two problems:

|

1. |

No stack frame will be allocated for the called procedure; we must find another place to put its locals. |

|

2. |

The LB register cannot be used to access the actual parameters; as the CAL instruction is deleted, the LB will still point to the local base of the calling procedure. |

The local variables of the called procedure will be put in the stack frame of the calling procedure, just after its own locals. The size of the stack frame of the calling procedure will be increased during its entire lifetime. Therefore our model will allow a limit to be set on the number of bytes for locals that the called procedure may have (see next section).

There are several alternatives to access the parameters.

An actual parameter may be any auxiliary expression, which

we will refer to as the actual parameter expression.

The value of this expression is stored in a location on the

stack (see above), the parameter location.

The alternatives for accessing parameters are:

|

- |

save the value of the stackpointer at the point of the CAL in a temporary variable X; this variable can be used to simulate the AB register, i.e. parameter locations are accessed via an offset to the value of X. |

|

- |

create a new temporary local variable T for the parameter (in the stack frame of the caller); every access to the parameter location must be changed into an access to T. |

|

- |

do not evaluate the actual parameter expression before the call; instead, substitute this expression for every use of the parameter location. |

The first method may be expensive if X is not put in a

register. We will not use this method. The time required to

evaluate and access the parameters when the second method is

used will not differ much from the normal calling sequence

(i.e. not in line call). It is not expensive, but there are

no extra savings either. The third method is essentially the

’by name’ parameter mechanism of Algol60. If the

actual parameter is just a numeric constant, it is

advantageous to use it. Yet, there are several circumstances

under which it cannot or should not be used. We will deal

with this in the next section.

In general we will use the third method, if it is possible

and desirable. Such parameters will be called in line

parameters. In all other cases we will use the second

method.

Feasibility and desirability analysis of in line substitution differ somewhat from most other techniques. Usually, much effort is needed to find a feasible opportunity for optimization (e.g. a redundant subexpression). Desirability analysis then checks if it is really advantageous to do the optimization. For IL, opportunities are easy to find. To see if an in line expansion is desirable will not be hard either. Yet, the main problem is to find the most desirable ones. We will deal with this problem later and we will first attend feasibility and desirability analysis.

There are several reasons why a procedure invocation cannot or should not be expanded in line.

A call to a procedure P cannot be expanded in line in any of the following cases:

|

1. |

The body of P is not available as EM text. Clearly, there is no way to do the substitution. |

|

2. |

P, or any procedure called by P (transitively), follows the chain of statically enclosing procedures (via a LXL or LXA instruction) or follows the chain of dynamically enclosing procedures (via a DCH). If the call were expanded in line, one level would be removed from the chains, leading to total chaos. This chaos could be solved by patching up every LXL, LXA or DCH in all procedures that could be part of the chains, but this is hard to implement. |

|

3. |

P, or any procedure called by P (transitively), calls a procedure whose body is not available as EM text. The unknown procedure may use an LXL, LXA or DCH. However, in several languages a separately compiled procedure has no access to the static or dynamic chain. In this case this point does not apply. |

|

4. |

P, or any procedure called by P (transitively), uses the LPB instruction, which converts a local base to an argument base; as the locals and parameters are stored in a non-standard way (differing from the normal EM calling sequence) this instruction would yield incorrect results. |

|

5. |

The total number of bytes of the parameters of P is not known. P may be a procedure with a variable number of parameters or may have an array of dynamic size as value parameter. |

It is undesirable to expand a call to a procedure P in line in any of the following cases:

|

1. |

P is large, i.e. the number of EM instructions of P exceeds some threshold. The expanded code would be large too. Furthermore, several programs in ACK, including the global optimizer itself, may run out of memory if they they have to run in a small address space and are provided very large procedures. The threshold may be set to infinite, in which case this point does not apply. |

|

2. |

P has many local variables. All these variables would have to be allocated in the stack frame of the calling procedure. |

If a call may be expanded in line, we have to decide how to access its parameters. In the previous section we stated that we would use in line parameters whenever possible and desirable. There are several reasons why a parameter cannot or should not be expanded in line.

No parameter of a procedure P can be expanded in line, in any of the following cases:

|

1. |

P, or any procedure called by P (transitively), does a store-indirect or a use-indirect (i.e. through a pointer). However, if the front-end has generated messages telling that certain parameters can not be accessed indirectly, those parameters may be expanded in line. |

|

2. |

P, or any procedure called by P (transitively), calls a procedure whose body is not available as EM text. The unknown procedure may do a store-indirect or a use-indirect. However, the same remark about front-end messages as for 1. holds here. |

|

3. |

The address of a parameter location is taken (via a LAL). In the normal calling sequence, all parameters are stored sequentially. If the address of one parameter location is taken, the address of any other parameter location can be computed from it. Hence we must put every parameter in a temporary location; furthermore, all these locations must be in the same order as for the normal calling sequence. |

|

4. |

P has overlapping parameters; for example, it uses the parameter at offset 10 both as a 2 byte and as a 4 byte parameter. Such code may be produced by the front ends if the formal parameter is of some record type with variants. |

Sometimes a specific parameter must not be expanded in

line.

An actual parameter expression cannot be expanded in line in

any of the following cases:

|

1. |

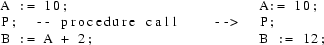

P stores into the parameter location. Even if the actual parameter expression is a simple variable, it is incorrect to change the ’store into formal’ into a ’store into actual’, because of the parameter mechanism used. In Pascal, the following expansion is incorrect: |

procedure p (x:integer);

begin

x := 20;

end;

...

a := 10; a := 10;

p(a); ---> a := 20;

write(a); write(a);

|

|

2. |

P changes any of the operands of the actual parameter expression. If the expression is expanded and evaluated after the operand has been changed, the wrong value will be used. |

|

3. |

The actual parameter expression has side effects. It must be evaluated only once, at the place of the call. |

It is undesirable to expand an actual parameter in line in the following case:

|

1. |

The parameter is used more than once (dynamically) and the actual parameter expression is not just a simple variable or constant. |

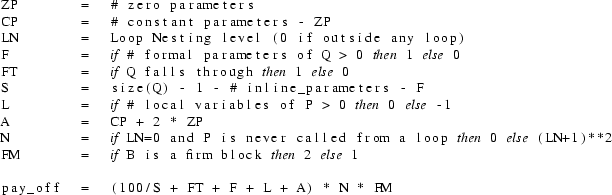

Using the information described in the previous section, we can find all calls that can be expanded in line, and for which this expansion is desirable. In general, we cannot expand all these calls, so we have to choose the ’best’ ones. With every CAL instruction that may be expanded, we associate a pay off, which expresses how desirable it is to expand this specific CAL.

Let Tc denote the portion of EM text involved in a specific call, i.e. the pushing of the actual parameter expressions, the CAL itself, the popping of the parameters and the pushing of the result (if any, via an LFR). Let Te denote the EM text that would be obtained by expanding the call in line. Let Pc be the original program and Pe the program with Te substituted for Tc. The pay off of the CAL depends on two factors:

|

- |

T = execution_time(Pe) - execution_time(Pc) |

|

- |

S = code_size(Pe) - code_size(Pc) |

The change in execution time (T) depends on:

|

- |

T1 = execution_time(Te) - execution_time(Tc) |

|

- |

N = number of times Te or Tc get executed. |

We assume that T1 will be the same every time the code gets executed. This is a reasonable assumption. (Note that we are talking about one CAL, not about different calls to the same procedure). Hence

T = N * T1

T1 can be estimated by a careful analysis of the transformations that are performed. Below, we list everything that will be different when a call is expanded in line:

|

- |

The CAL instruction is not executed. This saves a subroutine jump. |

|

- |

The instructions in the procedure prolog are not executed. These instructions, generated from the PRO pseudo, save some machine registers (including the old LB), set the new LB and allocate space for the locals of the called routine. The savings may be less if there are no locals to allocate. |

|

- |

In line parameters are not evaluated before the call and are not pushed on the stack. |

|

- |

All remaining parameters are stored in local variables, instead of being pushed on the stack. |

|

- |

If the number of parameters is nonzero, the ASP instruction after the CAL is not executed. |

|

- |

Every reference to an in line parameter is substituted by the parameter expression. |

|

- |

RET (return) instructions are replaced by BRA (branch) instructions. If the called procedure ’falls through’ (i.e. it has only one RET, at the end of its code), even the BRA is not needed. |

|

- |

The LFR (fetch function result) is not executed |

Besides these changes, which are caused directly by IL, other changes may occur as IL influences other optimization techniques, such as Register Allocation and Constant Propagation. Our heuristic rules do not take into account the quite inpredictable effects on Register Allocation. It does, however, favour calls that have numeric constants as parameter; especially the constant "0" as an inline parameter gets high scores, as further optimizations may often be possible.

It cannot be determined statically how often a CAL instruction gets executed. We will use loop nesting information here. The nesting level of the loop in which the CAL appears (if any) will be used as an indication for the number of times it gets executed.

Based on all these facts, the pay off of a call will be computed. The following model was developed empirically. Assume procedure P calls procedure Q. The call takes place in basic block B.

S stands for the size increase of the program, which is slightly less than the size of Q. The size of a procedure is taken to be its number of (non-pseudo) EM instructions. The terms "loop nesting level" and "firm" were defined in the chapter on the Intermediate Code (section "loop tables"). If a call is not inside a loop and the calling procedure is itself never called from a loop (transitively), then the call will probably be executed at most once. Such a call is never expanded in line (its pay off is zero). If the calling procedure doesn’t have local variables, a penalty (L) is introduced, as it will most likely get local variables if the call gets expanded.

A major factor in the implementation of Inline Substitution is the requirement not to use an excessive amount of memory. IL essentially analyzes the entire program; it makes decisions based on which procedure calls appear in the whole program. Yet, because of the memory restriction, it is not feasible to read the entire program in main memory. To solve this problem, the IL phase has been split up into three subphases that are executed sequentially:

|

1. |

analyze every procedure; see how it accesses its parameters; simultaneously collect all calls appearing in the whole program an put them in a call-list. |

|

2. |

use the call-list and decide which calls will be substituted in line. |

|

3. |

take the decisions of subphase 2 and modify the program accordingly. |

Subphases 1 and 3 scan the input program; only subphase 3 modifies it. It is essential that the decisions can be made in subphase 2 without using the input program, provided that subphase 1 puts enough information in the call-list. Subphase 2 keeps the entire call-list in main memory and repeatedly scans it, to find the next best candidate for expansion.

We will specify the data structures used by IL before describing the subphases.

In subphase 1 information is gathered about every procedure and added to the procedure table. This information is used by the heuristic rules. A proctable entry for procedure p has the following extra information:

|

- |

is it allowed to substitute an invocation of p in line? |

|

- |

is it allowed to put any parameter of such a call in line? |

|

- |

the size of p (number of EM instructions) |

|

- |

does p ’fall through’? |

|

- |

a description of the formal parameters that p accesses; this information is obtained by looking at the code of p. For every parameter f, we record: |

|

- |

the offset of f |

|

- |

the type of f (word, double word, pointer) |

|

- |

may the corresponding actual parameter be put in line? |

|

- |

is f ever accessed indirectly? |

|

- |

if f used: never, once or more than once? |

|

- |

the number of times p is called (see below) |

|

- |

the file address of its call-count information (see below). |

As a result of Inline Substitution, some procedures may become useless, because all their invocations have been substituted in line. One of the tasks of IL is to keep track which procedures are no longer called. Note that IL is especially keen on procedures that are called only once (possibly as a result of expanding all other calls to it). So we want to know how many times a procedure is called during Inline Substitution. It is not good enough to compute this information afterwards. The task is rather complex, because the number of times a procedure is called varies during the entire process:

|

1. |

If a call to p is substituted in line, the number of calls to p gets decremented by 1. |

|

2. |

If a call to p is substituted in line, and p contains n calls to q, then the number of calls to q gets incremented by n. |

|

3. |

If a procedure p is removed (because it is no longer called) and p contains n calls to q, then the number of calls to q gets decremented by n. |

(Note that p may be the same as q, if p is

recursive).

So we actually want to have the following information:

NRCALL(p,q) = number of call to q appearing in p,

for all procedures p and q that may be put in line.

This information, called call-count information is computed by the first subphase. It is stored in a file. It is represented as a number of lists, rather than as a (very sparse) matrix. Every procedure has a list of (proc,count) pairs, telling which procedures it calls, and how many times. The file address of its call-count list is stored in its proctable entry. Whenever this information is needed, it is fetched from the file, using direct access. The proctable entry also contains the number of times a procedure is called, at any moment.

The call-list is the major data structure use by IL. Every item of the list describes one procedure call. It contains the following attributes:

|

- |

the calling procedure (caller) |

|

- |

the called procedure (callee) |

|

- |

identification of the CAL instruction (sequence number) |

|

- |

the loop nesting level; our heuristic rules appreciate calls inside a loop (or even inside a loop nested inside another loop, etc.) more than other calls |

|

- |

the actual parameter expressions involved in the call; for every actual, we record: |

|

- |

the EM code of the expression |

|

- |

the number of bytes of its result (size) |

|

- |

an indication if the actual may be put in line |

The structure of the call-list is rather complex. Whenever a call is expanded in line, new calls will suddenly appear in the program, that were not contained in the original body of the calling subroutine. These calls are inherited from the called procedure. We will refer to these invocations as nested calls (see Fig. 5.1).

Fig. 5.1 Example of nested procedure calls

Nested calls may subsequently be put in line too

(probably resulting in a yet deeper nesting level, etc.). So

the call-list does not always reflect the source program,

but changes dynamically, as decisions are made. If a call to

p is expanded, all calls appearing in p will be added to the

call-list.

A convenient and elegant way to represent the call-list is

to use a LISP-like list. [Poel72a] Calls that appear at the

same level are linked in the CDR direction. If a call C to a

procedure p is expanded, all calls appearing in p are put in

a sub-list of C, i.e. in its CAR. In the example above,

before the decision to expand the call to p is made, the

call-list of procedure r looks like:

(call-to-x, call-to-p, call-to-y)

After the decision, it looks like:

(call-to-x, (call-to-p*, call-to-a, call-to-b), call-to-y)

The call to p is marked, because it has been substituted. Whenever IL wants to traverse the call-list of some procedure, it uses the well-known LISP technique of recursion in the CAR direction and iteration in the CDR direction (see page 1.19-2 of [Poel72a] ). All list traversals look like:

traverse(list)

{

for (c = first(list); c != 0; c = CDR(c)) {

if (c is marked) {

traverse(CAR(c));

} else {

do something with c

}

}

}

The entire call-list consists of a number of LISP-like lists, one for every procedure. The proctable entry of a procedure contains a pointer to the beginning of the list.

The tasks of the first subphase are to determine several attributes of every procedure and to construct the basic call-list, i.e. without nested calls. The size of a procedure is determined by simply counting its EM instructions. Pseudo instructions are skipped. A procedure does not ’fall through’ if its CFG contains a basic block that is not the last block of the CFG and that ends on a RET instruction. The formal parameters of a procedure are determined by inspection of its code.

The call-list in constructed by looking at all CAL instructions appearing in the program. The call-list should only contain calls to procedures that may be put in line. This fact is only known if the procedure was analyzed earlier. If a call to a procedure p appears in the program before the body of p, the call will always be put in the call-list. If p is later found to be unsuitable, the call will be removed from the list by the second subphase.

An important issue is the recognition of the actual

parameter expressions of the call. The front ends produces

messages telling how many bytes of formal parameters every

procedure accesses. (If there is no such message for a

procedure, it cannot be put in line). The actual parameters

together must account for the same number of bytes.A

recursive descent parser is used to parse side-effect free

EM expressions. It uses a table and some auxiliary routines

to determine how many bytes every EM instruction pops from

the stack and how many bytes it pushes onto the stack. These

numbers depend on the EM instruction, its argument, and the

wordsize and pointersize of the target machine. Initially,

the parser has to recognize the number of bytes specified in

the formals-message, say N. Assume the first instruction

before the CAL pops S bytes and pushes R bytes. If R > N,

too many bytes are recognized and the parser fails. Else, it

calls itself recursively to recognize the S bytes used as

operand of the instruction. If it succeeds in doing so, it

continues with the next instruction, i.e. the first

instruction before the code recognized by the recursive

call, to recognize N-R more bytes. The result is a number of

EM instructions that collectively push N bytes. If an

instruction is come across that has side-effects (e.g. a

store or a procedure call) or of which R and S cannot be

computed statically (e.g. a LOS), it fails.

Note that the parser traverses the code backwards. As EM

code is essentially postfix code, the parser works top

down.

If the parser fails to recognize the parameters, the call will not be substituted in line. If the parameters can be determined, they still have to match the formal parameters of the called procedure. This check is performed by the second subphase; it cannot be done here, because it is possible that the called procedure has not been analyzed yet.

The entire call-list is written to a file, to be processed by the second subphase.

The task of the second subphase is quite easy to understand. It reads the call-list file, builds an incore call-list and deletes every call that may not be expanded in line (either because the called procedure may not be put in line, or because the actual parameters of the call do not match the formal parameters of the called procedure). It assigns a pay-off to every call, indicating how desirable it is to expand it.

The subphase repeatedly scans the call-list and takes

the call with the highest ratio. The chosen one gets marked,

and the call-list is extended with the nested calls, as

described above. These nested calls are also assigned a

ratio, and will be considered too during the next scans.

After every decision the number of times every procedure is

called is updated, using the call-count information.

Meanwhile, the subphase keeps track of the amount of space

left available. If all space is used, or if there are no

more calls left to be expanded, it exits this loop. Finally,

calls to procedures that are called only once are also

chosen.

The actual parameters of a call are only needed by this subphase to assign a ratio to a call. To save some space, these actuals are not kept in main memory. They are removed after the call has been read and a ratio has been assigned to it. So this subphase works with abstracts of calls. After all work has been done, the actual parameters of the chosen calls are retrieved from a file, as they are needed by the transformation subphase.

The third subphase makes the actual modifications to the EM text. It is directed by the decisions made in the previous subphase, as expressed via the call-list. The call-list read by this subphase contains only calls that were selected for expansion. The list is ordered in the same way as the EM text, i.e. if a call C1 appears before a call C2 in the call-list, C1 also appears before C2 in the EM text. So the EM text is traversed linearly, the calls that have to be substituted are determined and the modifications are made. If a procedure is come across that is no longer needed, it is simply not written to the output EM file. The substitution of a call takes place in distinct steps:

change the calling sequence

|

The actual parameter expressions are changed. Parameters that are put in line are removed. All remaining ones must store their result in a temporary local variable, rather than push it on the stack. The CAL instruction and any ASP (to pop actual parameters) or LFR (to fetch the result of a function) are deleted. |

fetch the text of the called procedure

|

Direct disk access is used to to read the text of the called procedure. The file offset is obtained from the proctable entry. |

allocate bytes for locals and temporaries

|

The local variables of the called procedure will be put in the stack frame of the calling procedure. The same applies to any temporary variables that hold the result of parameters that were not put in line. The proctable entry of the caller is updated. |

put a label after the CAL

|

If the called procedure contains a RET (return) instruction somewhere in the middle of its text (i.e. it does not fall through), the RET must be changed into a BRA (branch), to jump over the remainder of the text. This label is not needed if the called procedure falls through. |

copy the text of the called procedure and modify it

|

References to local variables of the called routine and to parameters that are not put in line are changed to refer to the new local of the caller. References to in line parameters are replaced by the actual parameter expression. Returns (RETs) are either deleted or replaced by a BRA. Messages containing information about local variables or parameters are changed. Global data declarations and the PRO and END pseudos are removed. Instruction labels and references to them are changed to make sure they do not have the same identifying number as labels in the calling procedure. |

insert the modified text

|

The pseudos of the called procedure are put after the pseudos of the calling procedure. The real text of the callee is put at the place where the CAL was. |

take care of nested substitutions

|

The expanded procedure may contain calls that have to be expanded too (nested calls). If the descriptor of this call contains actual parameter expressions, the code of the expressions has to be changed the same way as the code of the callee was changed. Next, the entire process of finding CALs and doing the substitutions is repeated recursively. |

The sources of IL are in the following files and packages (the prefixes 1_, 2_ and 3_ refer to the three subphases):

|

il.h: |

declarations of global variables and data structures |

|

il.c: |

the routine main; the driving routines of the three subphases |

|

1_anal: |

contains a subroutine that analyzes a procedure |

|

1_cal: |

contains a subroutine that analyzes a call |

|

1_aux: |

implements auxiliary procedures used by subphase 1 |

|

2_aux: |

implements auxiliary procedures used by subphase 2 |

|

3_subst: |

the driving routine for doing the substitution |

|

3_change: |

lower level routines that do certain modifications |

|

3_aux: |

implements auxiliary procedures used by subphase 3 |

|

aux: |

implements auxiliary procedures used by several subphases. |

The Strength Reduction optimization technique (SR) tries to replace expensive operators by cheaper ones, in order to decrease the execution time of the program. A classical example is replacing a ’multiplication by 2’ by an addition or a shift instruction. These kinds of local transformations are already done by the EM Peephole Optimizer. Strength reduction can also be applied more generally to operators used in a loop.

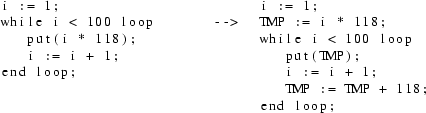

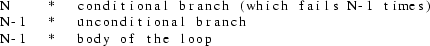

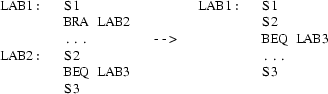

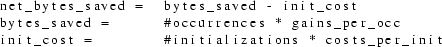

Fig. 6.1 An example of Strenght Reduction

In Fig. 6.1, a multiplication inside a loop is replaced by an addition inside the loop and a multiplication outside the loop. Clearly, this is a global optimization; it cannot be done by a peephole optimizer.

In some cases a related technique, test

replacement, can be used to eliminate the loop variable

i. This technique will not be discussed in this report.

In the example above, the resulting code can be further

optimized by using constant propagation. Obviously, this is

not the task of the Strength Reduction phase.

In this section we will describe the transformations performed by Strength Reduction (SR). Before doing so, we will introduce the central notion of an induction variable.